
Table of Contents

- Understanding the 2026 Neural Processor Landscape

- Identifying Performance Bottlenecks: Symptoms and Early Warning Signs

- Diagnostic Tools and Techniques: A Hardware Engineer's Toolkit

- Software Profiling and Optimization for Neural Processors

- Advanced Thermal Management and Power Delivery Solutions

- Addressing Memory Bandwidth and Latency Issues

- Future Trends in Neural Processor Diagnostics and Maintenance

Understanding the 2026 Neural Processor Landscape

The year is 2026, and neural processors, or NPUs, have become utterly ubiquitous. They're not just in your phone anymore; they're embedded in everything from your autonomous vehicle to your smart refrigerator, powering increasingly complex AI tasks. Understanding the architecture and performance characteristics of these NPUs is crucial for any hardware engineer tasked with maintaining them. We're talking about chips with hundreds of specialized cores, complex memory hierarchies, and power demands that would've seemed insane just a few years ago. The rise of heterogeneous computing, where NPUs work alongside CPUs and GPUs, adds another layer of complexity.

Think back to the summer of 2024. I was at a conference in Santa Clara, listening to a presentation about a new NPU architecture that promised a 10x performance increase over existing models. Everyone was buzzing, myself included. Fast forward two years, and those promises are… well, they're mostly realized, but at a cost. The increased complexity has led to new and often baffling bottlenecks that didn't exist before. It’s not just about raw clock speed anymore; it's about efficient data flow, optimized memory access, and, crucially, keeping the damn thing from melting down.

| Feature | Typical CPU (2026) | Typical GPU (2026) | Typical NPU (2026) |
|---|---|---|---|
| Primary Use Case | General-purpose computing | Graphics rendering, parallel computing | AI inference, machine learning tasks |
| Core Architecture | Complex, versatile cores | Massively parallel, SIMD | Specialized cores optimized for neural networks |
| Memory Bandwidth Needs | Moderate | High | Very High, often requiring HBM3 |
| Power Consumption | Moderate | High | Variable, can be extremely high under peak load |
| Typical Applications | Operating systems, general software | Gaming, video editing, scientific simulations | Image recognition, natural language processing, autonomous driving |

Looking ahead, the future of NPUs is all about even greater integration and specialization. We'll see more tightly coupled CPU-NPU architectures, new memory technologies designed specifically for AI workloads, and innovative cooling solutions that can handle the ever-increasing heat density. Expect to see wafer-scale NPUs becoming more common, pushing the boundaries of what's physically possible.

💡 Key Insight

Neural Processors (NPUs) in 2026 are complex, highly specialized chips driving AI across various applications. Their maintenance demands a deep understanding of architecture, memory, and thermal management.


Identifying Performance Bottlenecks: Symptoms and Early Warning Signs

So, your NPU is acting sluggish. How do you know if it's just a temporary blip or a sign of something more serious? The key is to be vigilant and look for patterns. Are AI tasks taking longer than usual? Is the system running hotter than normal? Are you seeing increased error rates in your AI models? These are all potential red flags. Don't just dismiss them as random occurrences; investigate further.

Here's a scenario: I was working on a self-driving car project in Detroit last winter. Everything seemed fine during testing in the lab, but when we took the car out on the road, the NPU started throwing errors. The car would occasionally misinterpret traffic signals or fail to recognize pedestrians. It was subtle at first, but the errors became more frequent over time. Turns out, the problem was thermal throttling. The cold weather was causing the cooling system to operate less efficiently, and the NPU was overheating under sustained load. We had to recalibrate the cooling system for real-world conditions. The lesson? Don't trust lab results blindly; always test in the field.
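A rough way to catch this kind of throttling from logged telemetry is to look for samples where the die is hot and the clock has dropped at the same time. The sketch below is only an illustration: the `Sample` fields, the 95 °C limit, and the 90% clock ratio are assumptions I made up for the example, not values from any particular NPU or from the project above.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    temp_c: float     # die temperature reported by the sensor (hypothetical field)
    clock_mhz: float  # NPU clock at the same instant (hypothetical field)


def find_throttle_events(samples, nominal_mhz, temp_limit_c=95.0, clock_drop=0.9):
    """Return indices of samples that are hot AND running below
    clock_drop * nominal_mhz. Both thresholds are illustrative assumptions."""
    events = []
    for i, s in enumerate(samples):
        hot = s.temp_c >= temp_limit_c
        slowed = s.clock_mhz < clock_drop * nominal_mhz
        if hot and slowed:
            events.append(i)
    return events


trace = [
    Sample(70.0, 1500.0),
    Sample(88.0, 1500.0),
    Sample(96.0, 1200.0),  # hot and slowed: likely throttling
    Sample(97.0, 1100.0),  # hot and slowed
    Sample(80.0, 1500.0),
]
throttled = find_throttle_events(trace, nominal_mhz=1500.0)
print(throttled)  # [2, 3]
```

Requiring both conditions keeps the check from firing on a hot chip that is still holding its clock, or on a clock drop caused by an idle power state.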

| Symptom | Possible Cause | Diagnostic Steps |
|---|---|---|
| Slower AI Inference Times | Thermal throttling, memory bottlenecks, software inefficiency | Monitor temperature, check memory usage, profile software |
| Increased Error Rates in AI Models | Data corruption, hardware failure, model degradation | Run memory tests, check hardware logs, retrain the model |
| Unusually High Power Consumption | Inefficient software, failing components, power supply issues | Profile software, check voltage levels, test power supply |
| System Instability or Crashes | Hardware failure, driver conflicts, overheating | Run diagnostics, update drivers, monitor temperature |
| Unexpected Noise from Cooling System | Fan failure, dust accumulation, obstructed airflow | Inspect cooling system, clean dust, replace faulty fans |

Remember, early detection is key. The sooner you identify a potential problem, the easier it will be to fix. Don't wait until your NPU completely fails before taking action. Be proactive and monitor your systems regularly.

💡 Smileseon's Pro Tip

Implement a robust monitoring system that tracks key performance indicators (KPIs) for your NPUs. Set up alerts to notify you when these KPIs deviate from their normal ranges.
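As a starting point, here is a minimal sketch of what "deviates from normal" could mean in code: keep a rolling window of recent readings and flag any value more than a few standard deviations away from it. The `KpiMonitor` class, the window size, and the z-score limit are all hypothetical choices for illustration; a production system would more likely sit on top of an existing metrics stack.

```python
from collections import deque
from statistics import mean, stdev


class KpiMonitor:
    """Flags readings that fall far outside a rolling baseline (illustrative only)."""

    def __init__(self, window=20, z_limit=3.0):
        self.history = deque(maxlen=window)
        self.z_limit = z_limit

    def observe(self, value):
        """Return True if value deviates from the rolling baseline."""
        alert = False
        if len(self.history) >= 5:  # need a few samples before judging
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_limit:
                alert = True
        if not alert:
            # Only normal readings update the baseline, so one spike
            # does not widen the band for the readings that follow.
            self.history.append(value)
        return alert


mon = KpiMonitor(window=10, z_limit=3.0)
latencies_ms = [10.0, 10.2, 9.9, 10.1, 10.0, 9.8, 10.3, 25.0, 10.1]
alerts = [mon.observe(x) for x in latencies_ms]
print(alerts)
```

One trade-off to be aware of: because anomalous readings never enter the baseline, a permanent shift (say, after a firmware update) will keep alerting until someone resets the monitor.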


Diagnostic Tools and Techniques: A Hardware Engineer's Toolkit

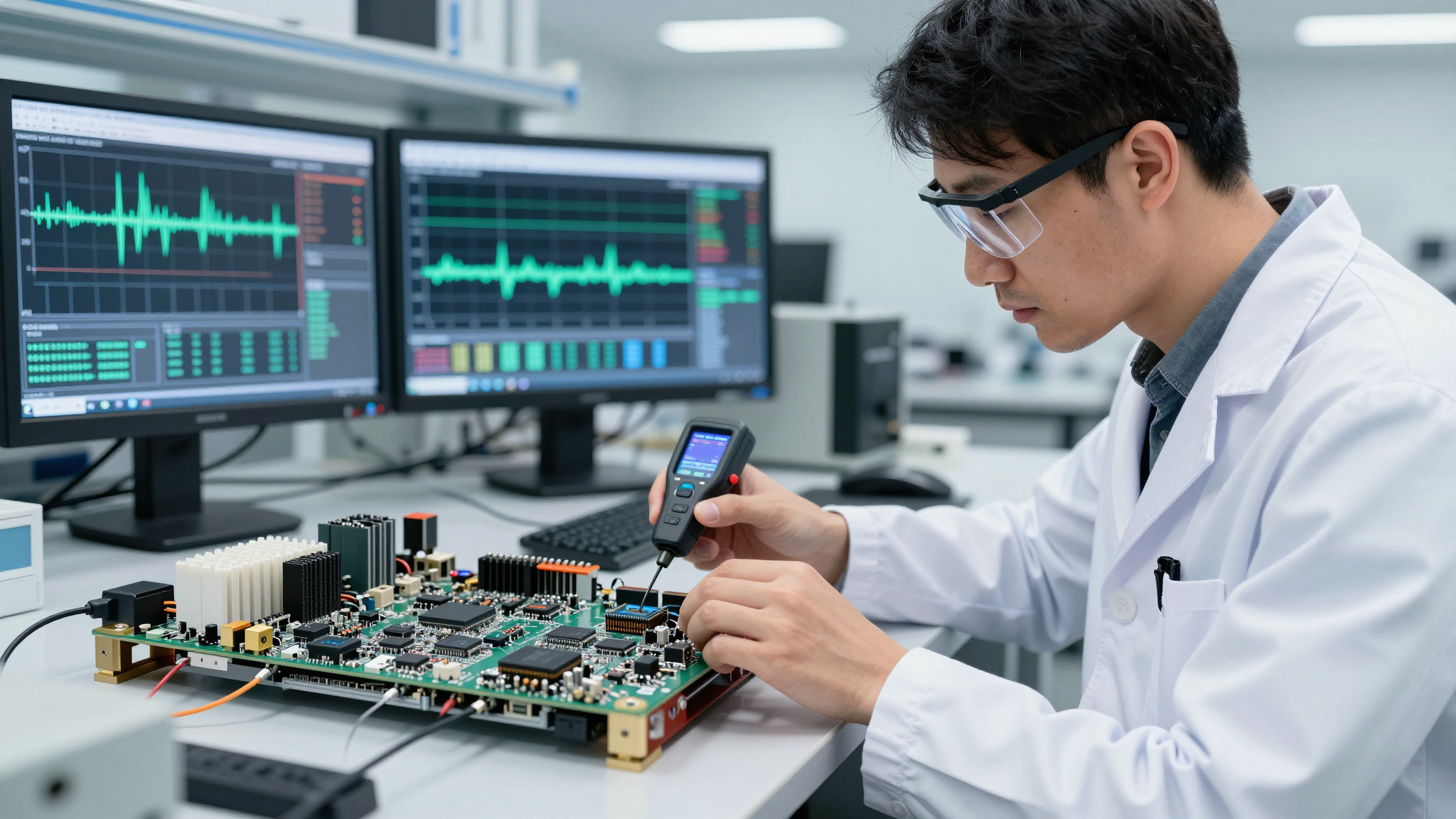

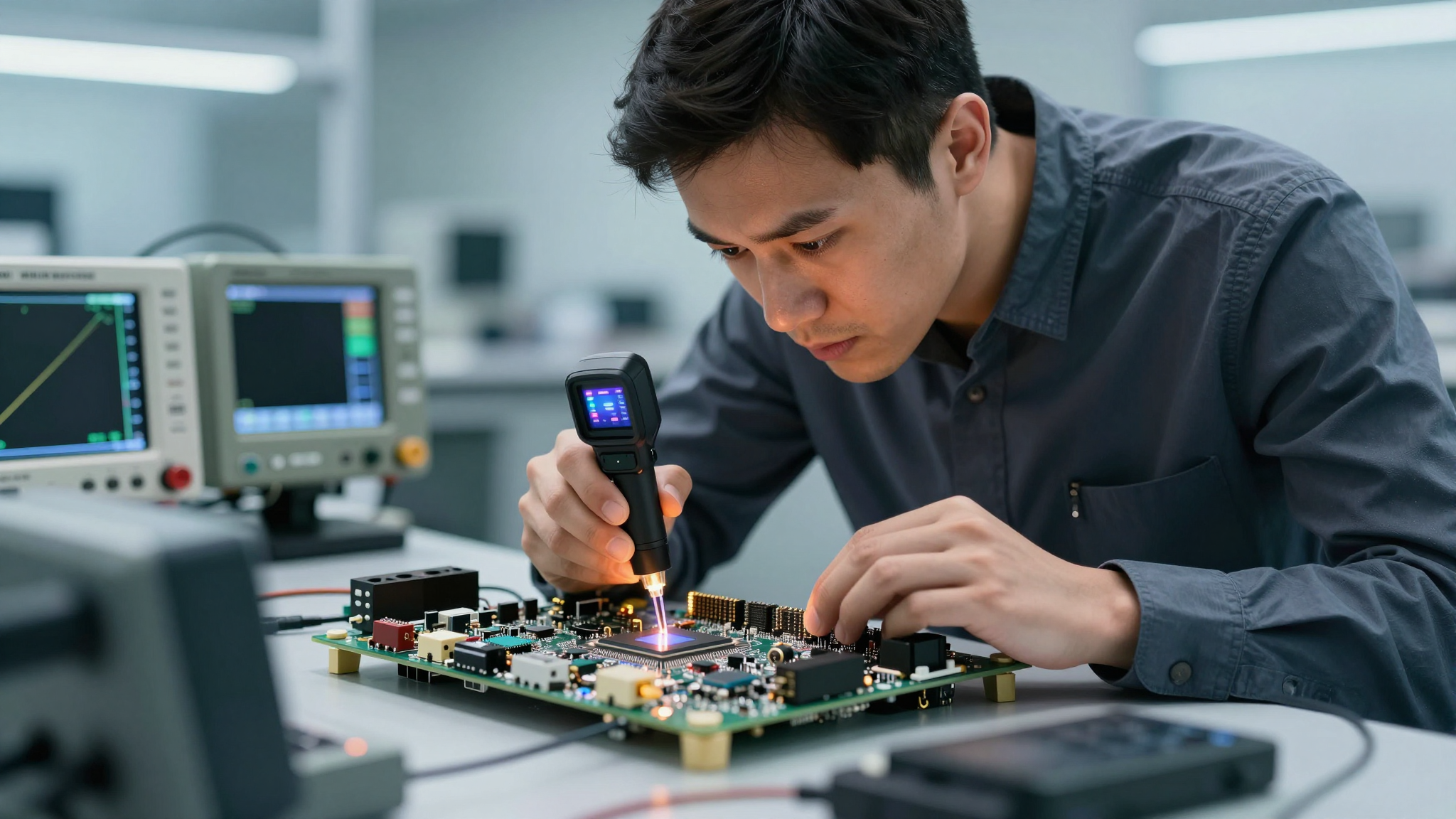

Alright, you've spotted a problem. Now it's time to break out the tools. In 2026, we're not just talking about a multimeter and a screwdriver anymore. We have sophisticated diagnostic software, thermal imaging cameras, and even AI-powered analysis tools that can help us pinpoint the root cause of NPU bottlenecks. Knowing how to use these tools effectively is essential.

Let's start with the basics. Thermal imaging is your friend. A good thermal camera can quickly identify hotspots on the NPU, indicating areas of excessive heat generation. This can be a sign of inefficient cooling, component failure, or even a manufacturing defect. I remember one time, we had a batch of NPUs that were consistently overheating. Thermal imaging revealed that the heat spreader wasn't making proper contact with the die in certain areas. It turned out to be a subtle manufacturing flaw that would have been impossible to detect without thermal imaging.

| Tool | Purpose | Benefits | Limitations |
|---|---|---|---|
| Thermal Imaging Camera | Identify hotspots and thermal inefficiencies | Non-invasive, quick identification of problem areas | Can be expensive, requires proper interpretation of data |
| Diagnostic Software | Run stress tests, monitor performance metrics, check for errors | Comprehensive testing, automated reporting | Can be time-consuming, may not detect all problems |
| Oscilloscope | Analyze electrical signals and identify anomalies | Precise measurements, detection of signal integrity issues | Requires specialized knowledge, invasive testing |
| Logic Analyzer | Capture and analyze digital signals | Debugging complex digital circuits, identifying timing issues | Requires specialized knowledge, invasive testing |
| AI-Powered Analysis Tools | Analyze diagnostic data and identify patterns that humans might miss | Automated analysis, faster troubleshooting | Relies on accurate data, can be prone to biases |

Don't underestimate the power of good old-fashioned visual inspection. Check for loose connections, damaged components, and dust accumulation. Dust is a surprisingly common culprit, especially in environments with poor ventilation. I've seen cases where dust buildup on the cooling fins reduced cooling efficiency by as much as 30%.

🚨 Critical Warning

Always disconnect power before performing any physical inspection or maintenance on your NPU, and ground yourself before touching the board. Static electricity can damage sensitive components even when the system is powered off.

Software Profiling and Optimization for Neural Processors

It's not always a hardware problem. Sometimes, the bottleneck lies in the software. Inefficient code, poorly optimized AI models, and driver conflicts can all contribute to NPU performance issues. Software profiling tools are essential for identifying these problems. These tools allow you to see how your AI models are utilizing the NPU's resources, pinpointing areas where optimization is needed.

One of the most common software bottlenecks is inefficient memory access. NPUs rely on fast memory to feed data to their processing cores. If your AI model is constantly accessing data from slow memory, it's going to slow down the entire system. The solution is to optimize your data structures and access patterns to minimize memory latency. This might involve rearranging your data in memory, using caching techniques, or even switching to a different memory technology altogether.

| Technique | Description | Benefits | Considerations |
|---|---|---|---|
| Model Pruning | Removing unimportant connections or neurons from the AI model | Reduces model size, improves inference speed, lowers memory requirements | Can impact model accuracy if not done carefully |
| Quantization | Reducing the precision of the model's weights and activations | Reduces memory footprint, improves performance on NPUs with limited precision support | Can lead to a slight decrease in model accuracy |
| Operator Fusion | Combining multiple operations into a single operation | Reduces overhead, improves performance | Requires specialized knowledge of the NPU architecture |
| Kernel Optimization | Optimizing the low-level code that executes on the NPU | Maximizes performance, unlocks the full potential of the NPU | Requires deep understanding of the NPU's instruction set |
| Driver Updates | Ensuring that the NPU drivers are up to date | Fixes bugs, improves performance, enables new features | Can sometimes introduce new problems |

Don't forget about driver updates. Outdated or incompatible drivers can cause all sorts of problems. Make sure you're always using the latest drivers recommended by the NPU manufacturer. And if you're experiencing driver conflicts, try rolling back to a previous version.

💡 Key Insight

Software bottlenecks can significantly impact NPU performance. Profiling tools and optimization techniques like model pruning and quantization are crucial for maximizing efficiency.
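To make quantization a little more concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is one common scheme among several, not necessarily what any given NPU toolchain does, and the helper names `quantize_int8` and `dequantize` are my own for this example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range we use.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)          # [50, -127, 0, 100]
print(max_error)  # at most half a quantization step (scale / 2)
```

Notice that the small weight 0.003 collapses to zero. That is the "slight decrease in model accuracy" from the table in miniature: each value can be off by up to half a step, and the step size is set by the largest weight in the tensor.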


Advanced Thermal Management and Power Delivery Solutions

As NPUs become more powerful, thermal management becomes increasingly critical. We're talking about chips that can generate hundreds of watts of heat in a small area. If you don't keep them cool, they'll throttle performance or even fail completely. In 2026, we have a range of advanced cooling solutions at our disposal, from liquid cooling to vapor chambers to exotic materials like graphene.
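A quick back-of-the-envelope check helps here. In steady state, junction temperature is roughly ambient temperature plus power times the thermal resistance of the cooling path. The sketch below applies that standard relation; the wattage, ambient temperature, and thermal resistance figures are made-up illustrative numbers, not specs for any real part.

```python
def junction_temp_c(ambient_c, power_w, r_theta_c_per_w):
    """Steady-state estimate: T_junction = T_ambient + P * R_theta."""
    return ambient_c + power_w * r_theta_c_per_w


def max_power_w(ambient_c, temp_limit_c, r_theta_c_per_w):
    """Largest sustained power that keeps the junction at or below temp_limit_c."""
    return (temp_limit_c - ambient_c) / r_theta_c_per_w


# Hypothetical 300 W NPU in a 35 C chassis.
healthy = junction_temp_c(35.0, 300.0, 0.15)   # good cooler: about 80 C
degraded = junction_temp_c(35.0, 300.0, 0.25)  # worse thermal path: about 110 C
budget = max_power_w(35.0, 95.0, 0.25)         # about 240 W before hitting 95 C

print(round(healthy, 1), round(degraded, 1), round(budget, 1))
```

The point of the exercise: a modest rise in thermal resistance turns into tens of degrees at these power levels, which is why the cooling solution matters as much as the silicon.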

Liquid cooling is becoming increasingly popular, especially in high-performance applications. It's more effective than air cooling at removing heat, and it's relatively quiet. However, it's also more complex and expensive. You need to worry about leaks, corrosion, and proper maintenance. I remember one time, we had a liquid cooling system fail in a server farm. The coolant leaked onto the motherboard, causing a short circuit that took down the entire system. It was a total disaster. The lesson? Liquid cooling is great, but it requires careful planning and execution.

| Cooling Solution | Description | Benefits | Drawbacks |
|---|---|---|---|
| Air Cooling | Using fans and heatsinks to dissipate heat | Simple, inexpensive, reliable | Less effective at removing heat than other solutions, can be noisy |
| Liquid Cooling | Using liquid coolant to transfer heat away from the NPU | More effective at removing heat than air cooling, quieter | More complex, expensive, requires maintenance, risk of leaks |
| Vapor Chambers | Using a sealed chamber filled with a liquid that evaporates and condenses to transfer heat | Effective at spreading heat, relatively thin and lightweight | More expensive than air cooling, can be susceptible to damage |
| Thermoelectric Cooling (TEC) | Using the Peltier effect to transfer heat from one side of a device to the other | Can achieve very low temperatures, precise temperature control | Inefficient, generates a lot of waste heat |
| Immersion Cooling | Submerging the entire NPU in a dielectric fluid | Extremely effective at removing heat, allows for very high power densities | Complex, expensive, requires specialized equipment |

Power delivery goes hand in hand with thermal management. NPUs require stable and reliable power to operate efficiently. If the power supply is inadequate or the voltage fluctuates, it can lead to performance problems and even hardware damage. Make sure you're using a high-quality power supply that can meet the NPU's peak power demands, and check the voltage levels regularly to ensure they stay within the range specified by the manufacturer.
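If you are logging rail voltages, the "within the specified range" check is easy to automate. The sketch below assumes a hypothetical 0.80 V core rail with a ±5% tolerance; both numbers are placeholders, so substitute the values from your NPU's datasheet.

```python
def out_of_spec(readings_v, nominal_v, tolerance=0.05):
    """Return (index, voltage) pairs that fall outside nominal_v +/- tolerance."""
    low = nominal_v * (1 - tolerance)
    high = nominal_v * (1 + tolerance)
    return [(i, v) for i, v in enumerate(readings_v) if not (low <= v <= high)]


# Hypothetical samples from a 0.80 V core rail (allowed band: 0.76 V to 0.84 V).
rail = [0.80, 0.81, 0.79, 0.74, 0.80, 0.85]
violations = out_of_spec(rail, nominal_v=0.80)
print(violations)  # [(3, 0.74), (5, 0.85)]
```

Keeping the sample index alongside the voltage makes it easier to line up a droop or overshoot with whatever the workload was doing at that moment.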

💡 Smileseon's Pro Tip

Consider using a redundant power supply to ensure continuous operation in case of a power supply failure.


Addressing Memory Bandwidth and Latency Issues

We've touched on memory briefly, but it's so important that it deserves its own section. NPUs are incredibly memory-hungry. They need to be able to access vast amounts of data quickly to perform their calculations. Memory bandwidth and latency are two key metrics that determine how well an NPU can access memory. Bandwidth is the amount of data that can be transferred per unit of time, while latency is the time it takes to access a particular piece of data. Both are critical for NPU performance.
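A simple first-order model shows why both numbers matter: the time for a transfer is roughly the access latency plus the payload size divided by bandwidth. The figures below (100 ns latency, 100 GB/s bandwidth) are round illustrative values, not measurements of any specific memory.

```python
def transfer_time_s(size_bytes, bandwidth_bytes_per_s, latency_s):
    """First-order estimate: fixed access latency plus streaming time."""
    return latency_s + size_bytes / bandwidth_bytes_per_s


LATENCY_S = 100e-9   # assumed 100 ns access latency
BANDWIDTH = 100e9    # assumed 100 GB/s sustained bandwidth

small = transfer_time_s(64, BANDWIDTH, LATENCY_S)       # one cache line
large = transfer_time_s(1 << 30, BANDWIDTH, LATENCY_S)  # a 1 GiB tensor

# Fraction of each transfer's time that is pure latency.
small_latency_share = LATENCY_S / small
large_latency_share = LATENCY_S / large

print(f"64 B:  {small * 1e9:.2f} ns, latency share {small_latency_share:.1%}")
print(f"1 GiB: {large * 1e3:.2f} ms, latency share {large_latency_share:.4%}")
```

Under these assumptions, the small transfer is almost entirely latency, while the large one is almost entirely bandwidth. Workloads made of many small scattered reads are latency-bound no matter how wide the memory bus is.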

In 2026, we're seeing widespread adoption of High Bandwidth Memory (HBM) in high-performance NPUs. HBM is a type of memory that's designed specifically for applications that require high bandwidth. It's much faster than traditional DDR memory, but it's also more expensive. If you're working with an NPU that uses HBM, make sure it's properly configured and that the drivers are up to date. I had a situation last year where an engineer installed new HBM modules, but forgot to update the firmware. The NPU recognized the new memory, but it wasn't able to use it effectively. The performance was actually worse than before.

| Memory Type | Bandwidth | Latency | Cost | Typical Use Case |
|---|---|---|---|---|
| DDR5 | Moderate | Moderate | Low | General-purpose computing |
| HBM3 | Very High | Low | High | High-performance NPUs, GPUs |
| GDDR7 | High | Moderate | Moderate | Mid-range GPUs, some NPUs |
| Embedded SRAM | Extremely High (but limited capacity) | Very Low | Very High | On-chip caches, critical data storage |
| 3D-Stacked Memory | Very High | Low | High | Emerging technology, potential for future NPUs |

Even if you have plenty of bandwidth, latency can still be a problem. If your AI model is constantly accessing data from different parts of memory, the latency can add up. The solution is to optimize your data layout to minimize the distance between frequently accessed data points. This might involve using data structures that are optimized for locality of reference, or even using custom memory allocation schemes.
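To see how much layout and access order can matter, here is a toy simulation of a direct-mapped cache walking the same row-major matrix in two different orders. The cache geometry (64 lines of 64 bytes) and the matrix size are arbitrary assumptions chosen to make the effect obvious; real NPU memory hierarchies are considerably more elaborate.

```python
def count_misses(addresses, line_bytes=64, num_lines=64):
    """Simulate a direct-mapped cache and count misses for an address stream."""
    tags = [None] * num_lines
    misses = 0
    for addr in addresses:
        line = addr // line_bytes  # which memory line this byte lives in
        slot = line % num_lines    # the single cache slot that line maps to
        if tags[slot] != line:
            tags[slot] = line
            misses += 1
    return misses


ROWS, COLS, ELEM = 128, 128, 4  # row-major matrix of 4-byte elements

# Same data, same layout, two traversal orders.
row_order = [(r * COLS + c) * ELEM for r in range(ROWS) for c in range(COLS)]
col_order = [(r * COLS + c) * ELEM for c in range(COLS) for r in range(ROWS)]

row_misses = count_misses(row_order)
col_misses = count_misses(col_order)
print(row_misses, col_misses)  # 1024 16384
```

Walking along rows touches each 64-byte line once and reuses it for 16 consecutive elements. Walking down columns jumps 512 bytes per access, so in this toy cache every single access misses: 16 times more memory traffic for identical work.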

🚨 Critical Warning

Never mix mismatched memory modules (different types, speeds, or timings) within the same memory channel or pool. This can lead to instability and performance problems.

Future Trends in Neural Processor Diagnostics and Maintenance

Looking ahead, the future of NPU diagnostics and maintenance is all about automation and AI. We're moving towards a world where NPUs can diagnose their own problems and even fix them automatically. AI-powered diagnostic tools will be able to analyze vast amounts of data to identify patterns and predict failures before they happen. Self-healing NPUs will be able to reroute data around damaged areas or even repair themselves using nanobots. It sounds like science fiction, but it's closer than you think.

One of the most exciting trends is the development of "digital twins" for NPUs. A digital twin is a virtual replica of a physical NPU that can be used to simulate its behavior under different conditions. This allows engineers to test new software, diagnose problems, and optimize performance without having to risk damaging the real NPU. I saw a demo of this technology at a conference in Munich last year, and I was blown away. They were able to simulate the effects of a thermal runaway in real-time, and then use the digital twin to develop a solution that prevented the actual NPU from failing. It was like something out of a movie.
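To give a feel for the idea, here is a deliberately tiny stand-in for a thermal digital twin: a single lumped thermal mass integrated with Euler steps, used to compare an uncapped workload against a simple power-capping policy. This is a toy under my own assumptions (one thermal resistance, one thermal capacitance, invented wattages) and has nothing to do with how the Munich demo actually worked.

```python
def simulate(power_fn, ambient_c=30.0, r_theta=0.2, c_th=50.0, dt=0.1, steps=6000):
    """Euler-integrate C * dT/dt = P - (T - T_ambient) / R and return the trace.

    r_theta is in C/W, c_th in J/C, dt in seconds; all values are illustrative.
    """
    temp = ambient_c
    trace = []
    for _ in range(steps):
        power = power_fn(temp)
        temp += dt * (power - (temp - ambient_c) / r_theta) / c_th
        trace.append(temp)
    return trace


def full_power(temp_c):
    """Policy 1: always draw 400 W, regardless of temperature."""
    return 400.0


def with_power_cap(temp_c, limit_c=95.0):
    """Policy 2: drop to 250 W whenever the die reaches limit_c."""
    return 400.0 if temp_c < limit_c else 250.0


uncapped_peak = max(simulate(full_power))
capped_peak = max(simulate(with_power_cap))
print(round(uncapped_peak, 1), round(capped_peak, 1))
```

In this model the uncapped run settles near 110 °C, while the capped run hovers just above 95 °C. The appeal of a twin is exactly this: you can try a mitigation policy against the model before trusting it on real hardware.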

| Trend | Description | Potential Benefits | Challenges |
|---|---|---|---|
| AI-Powered Diagnostics | Using AI algorithms to analyze diagnostic data and predict failures | Faster troubleshooting, reduced downtime, proactive maintenance | Requires large datasets, can
